Software Verification in the Google App-Engine Cloud

Abstract

Software verification often requires a large amount of computing resources. In the last years, cloud services emerged as an inexpensive, flexible, and energy-efficient source of computing power. We have investigated if such cloud resources can be used effectively for verification. We chose the platform-as-a-service offer Google App Engine and ported the open-source verification framework CPAchecker to it. We provide our new verification service as a web front-end to users who wish to solve single verification tasks (tutorial usage), and an API for integrating the service into existing verification infrastructures (massively parallel bulk usage). We experimentally evaluate the effectiveness of this service and show that it can be successfully used to offload verification work to the cloud, considerably sparing local verification resources.

Links

- Electronic version (PDF) of paper

- CPAchecker home page

- CPAchecker web interface at the Google App Engine (latest version)

- Version 1.3.2-cav14 of CPAchecker as used in the experiments for the paper (SVN)

Full Results

The full result tables are available here:

Each table shows the results of executing the benchmarks both in the App Engine (with 128 MB heap size), and locally (with 128 MB and 4096 MB heap size). You can click on the cells in the status columns in these tables to see the CPAchecker log file. Clicking on the column headings (e.g., “cputime”) will produce a graph (this requires JavaScript to be enabled). The CSV files contain the same columns in the same order.

Discussion of Speedup

The effective parallelization factor of our experiments when running in the Google App Engine was 25 to 30, although we configured the App Engine to use up to 100 instances in parallel. This was due to queue saturation problems which could be fixed with an improved implementation.

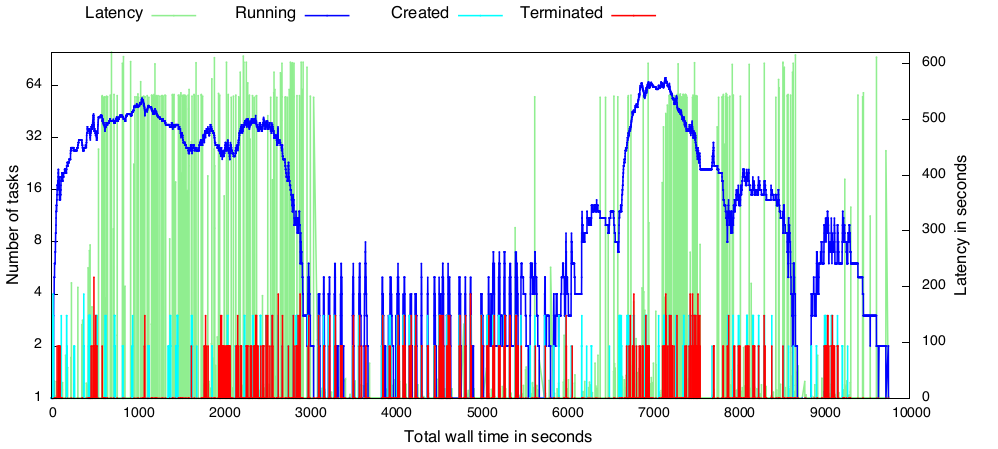

The following graph shows how the benchmarks for the explicit-value analysis were executed over the time:

One can see that in the beginning most of the executed tasks were difficult for the verifier

(the latency, i.e., the time that was necesssary to wait for the result of a given single task,

is near the time limit). At the same time, the number of tasks running simultaneously is high (blue line).

After almost 3000 s, there were more benchmarks executed that are easy for the verifier

(the green line for latency drops to almost zero).

This means that, to keep the instances saturated, the number of tasks started per minute (light-blue line)

and terminated per minute (red line) would need to increase, but both values stay low,

and thus the number of simultaneously running tasks drops to a low value, too.

Later on, there were again more difficult benchmarks in the queue, and thus the instances could get better saturated again.

The reason for this behavior is that our implementation uses the same set of 100 instances for processing the incoming HTTP requests that submit new tasks into the queue as well as for processing the actual verification tasks from the queue. The App Engine tends to assign verification tasks to all available instances, blocking the handling of the task-submission requests and letting the queue run out of tasks. This could be optimized by restructuring our application and reserving a few instances for handling client requests.